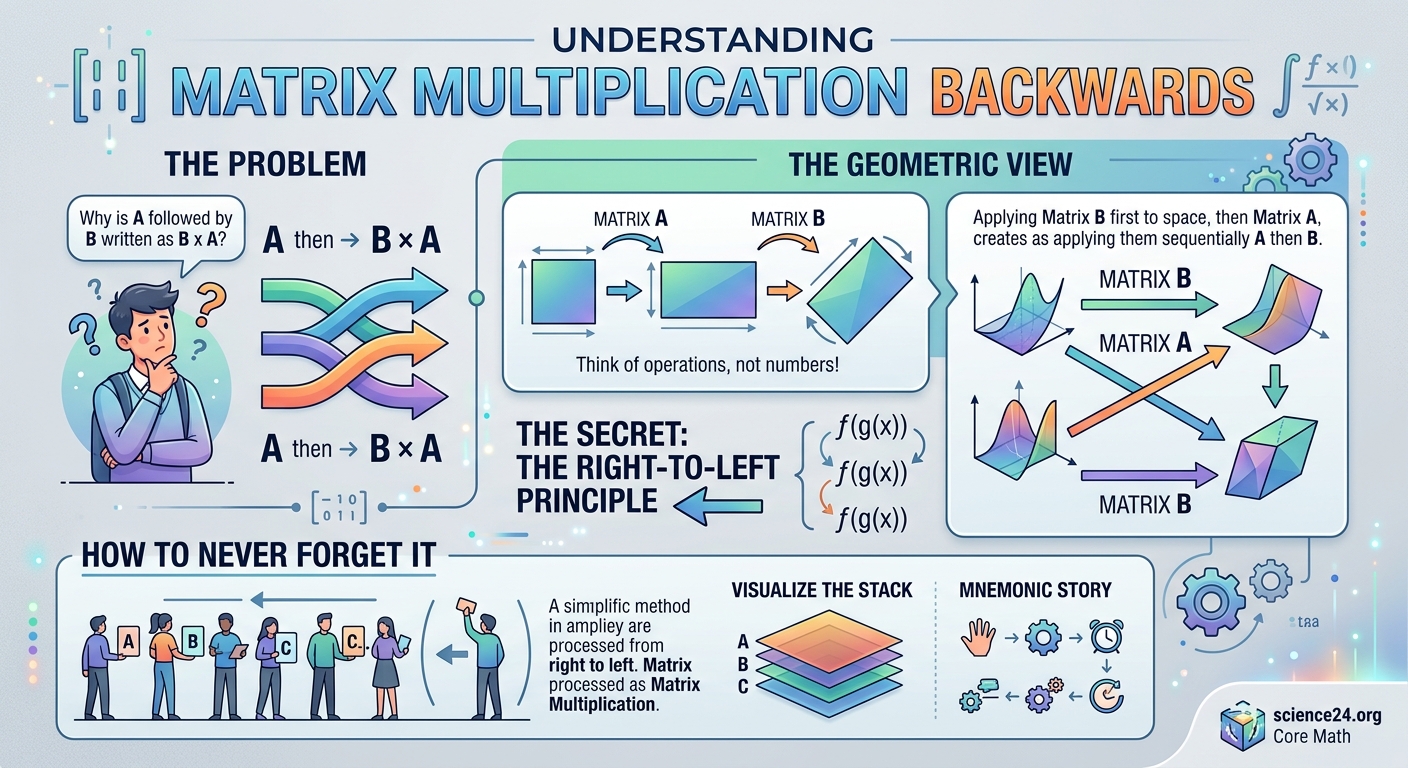

You’re staring at the expression (AB)x and your brain is screaming that B should happen first. The notation looks like it flows left to right, but the actual computation runs in reverse. This isn’t a quirk or a mistake. Matrix multiplication follows a right-to-left order for a profound mathematical reason, and once you understand it, you’ll never forget which transformation happens first.

Matrix multiplication appears backwards because matrices represent transformations that apply from right to left. When you write (AB)x, matrix B transforms x first, then A transforms the result. This right-to-left order matches function composition and preserves the logical sequence of operations. Understanding this connection to transformations makes the order intuitive rather than confusing, turning a memorization problem into a conceptual anchor you’ll never lose.

Matrices are transformations, not simple numbers

Think about what a matrix actually does. When you multiply a matrix by a vector, you’re transforming that vector into a new position or orientation. A 2×2 matrix might rotate a point, scale it, or reflect it across an axis.

The matrix isn’t just sitting there as a passive number. It’s an active operator that changes whatever comes after it.

This is fundamentally different from regular multiplication. When you write 3 × 5, both numbers are just values, and swapping them changes nothing: 3 × 5 = 5 × 3.

Matrices represent actions. And actions have a natural sequence.

Function composition runs right to left

Remember function notation from algebra? When you write f(g(x)), you apply g first, then f. The functions execute from the inside out, which means right to left when you read the expression.

This isn’t arbitrary. It matches how we naturally chain operations.

If you want to first double a number and then add five, you write f(g(x)) where g(x) = 2x and f(x) = x + 5. The result is f(g(x)) = 2x + 5.

Matrix multiplication follows the exact same logic. Writing (AB)x means “first apply B to x, then apply A to the result.”

The notation preserves the visual structure of function composition. Both run right to left because both represent sequential operations where the output of one becomes the input of the next.
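The doubling-then-adding example can be checked directly. The following is a minimal Python sketch; the compose helper is a hypothetical name introduced for illustration, not a standard library function.

```python
def g(x):
    """First step: double the input."""
    return 2 * x


def f(x):
    """Second step: add five."""
    return x + 5


def compose(*funcs):
    """Compose functions in written order, so compose(f, g)(x) == f(g(x)).

    Hypothetical helper: the rightmost function is applied first.
    """
    def composed(x):
        for func in reversed(funcs):
            x = func(x)
        return x
    return composed


f_after_g = compose(f, g)  # written f, g; executes g, then f
g_after_f = compose(g, f)  # written g, f; executes f, then g

print(f_after_g(3))  # 2*3 + 5 = 11
print(g_after_f(3))  # 2*(3 + 5) = 16
```

Swapping the written order changes the answer, which is exactly the behavior matrix products inherit.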

Why the notation looks backwards at first

Your confusion is completely valid. Most mathematical notation flows left to right. We read equations from left to right. We solve problems step by step moving across the page.

But transformation chains are different. They stack operations outward from the input, so the operation applied last ends up written first.

Consider this practical example: you want to rotate a shape 90 degrees, then scale it by 2. In matrix notation, you’d write SR where S is the scaling matrix and R is the rotation matrix.

When you compute (SR)x, the rotation R applies to x first. Then the scaling S applies to the rotated result.

The leftmost matrix is the last operation. The rightmost matrix is the first operation.

This feels backwards until you realize you’re reading the transformation history from result to origin, not from start to finish.

A concrete example with transformations

Let’s use actual numbers. Suppose you have a point at (1, 0) and you want to rotate it 90 degrees counterclockwise, then scale it by 2.

The rotation matrix R is:

$$R = \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix}$$

The scaling matrix S is:

$$S = \begin{bmatrix} 2 & 0 \\ 0 & 2 \end{bmatrix}$$

If you write (SR)x where x = [1, 0], here’s what happens:

- First, R transforms x: Rx = [0, -1; 1, 0] × [1, 0]ᵀ = [0, 1]ᵀ

- Then, S transforms that result: S(Rx) = [2, 0; 0, 2] × [0, 1]ᵀ = [0, 2]ᵀ

The point (1, 0) rotates to (0, 1), then scales to (0, 2).

Notice how the matrix closest to x acts first. The matrix farthest from x acts last.

This is identical to writing S(R(x)) in function notation. The innermost function R executes first.
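To confirm the arithmetic, here is a small Python sketch of the same example. It uses plain lists rather than a numerical library, and the helper names matvec and matmul are assumptions made for this illustration.

```python
def matvec(A, x):
    """Multiply matrix A (a list of rows) by column vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]


R = [[0, -1], [1, 0]]  # rotate 90 degrees counterclockwise
S = [[2, 0], [0, 2]]   # scale by 2
x = [1, 0]

rotated = matvec(R, x)                # Rx: the matrix next to x acts first
step_by_step = matvec(S, rotated)     # S(Rx)
combined = matvec(matmul(S, R), x)    # (SR)x

print(rotated)       # [0, 1]
print(step_by_step)  # [0, 2]
print(combined)      # [0, 2]
```

Both routes land on (0, 2), because forming SR first and applying the transformations one at a time describe the same composition.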

How to remember the order forever

Stop thinking of matrix multiplication as “backwards.” Start thinking of it as “inside out.”

The matrix touching the vector is the first transformation. Each matrix to the left is another layer wrapping around the previous result.

Here’s a memory anchor that works for most students: imagine you’re getting dressed. If you put on a shirt (S) and then a jacket (J), the expression is J(S(you)). The shirt touches you first. The jacket goes on last but appears leftmost in the notation.

Another approach: read the transformation chain from right to left out loud. For (ABC)x, say “first C, then B, then A.” Make it a habit every time you see a matrix product.

The more you verbalize the right-to-left sequence, the more automatic it becomes.
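The “first C, then B, then A” habit can be mirrored in code. This is a sketch under stated assumptions: apply_chain is a hypothetical helper, and the three matrices are arbitrary examples chosen so that each step is easy to follow.

```python
def matvec(A, x):
    """Multiply matrix A (a list of rows) by column vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def apply_chain(matrices, x):
    """Apply matrices listed in written order [A, B, C] to x as A(B(Cx)).

    Returns the final vector and the intermediate results in execution order.
    """
    steps = []
    for M in reversed(matrices):  # the rightmost matrix acts first
        x = matvec(M, x)
        steps.append(x)
    return x, steps


A = [[1, 0], [0, -1]]  # reflect across the x-axis
B = [[3, 0], [0, 1]]   # stretch x by 3
C = [[0, -1], [1, 0]]  # rotate 90 degrees counterclockwise

result, steps = apply_chain([A, B, C], [1, 2])
print(steps)   # [[-2, 1], [-6, 1], [-6, -1]]
print(result)  # [-6, -1]
```

The list of steps reads in execution order: the rotation C, then the stretch B, then the reflection A.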

Common mistakes students make

Understanding why the order matters doesn’t mean you’ll never make errors. Here are the patterns that trip people up most often:

| Mistake | Why it happens | How to fix it |
|---|---|---|
| Computing AB instead of BA | Reading left to right by habit | Always start with the matrix closest to the vector |
| Forgetting associativity | Thinking parentheses change the transformation order | Remember (AB)C = A(BC) but AB ≠ BA |
| Mixing up row and column operations | Confusing which dimension must match | The number of columns in the left matrix must equal the number of rows in the right matrix |
| Applying transformations in the wrong sequence | Not checking which matrix touches the data | Trace the computation from the vector outward |

The most frequent error is computing transformations in the visual reading order instead of the mathematical execution order. You see AB and instinctively want A to happen first.

Fight that instinct. Train yourself to look at the rightmost element first.
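The dimension rule from the table is easy to check mechanically. The sketch below assumes matrices are stored as lists of rows; shape and product_shape are hypothetical helper names.

```python
def shape(M):
    """Return (rows, columns) of a matrix stored as a list of rows."""
    return len(M), len(M[0])


def product_shape(A, B):
    """Return the shape of AB, or None if the product is undefined."""
    rows_a, cols_a = shape(A)
    rows_b, cols_b = shape(B)
    if cols_a != rows_b:  # columns of the left must match rows of the right
        return None
    return rows_a, cols_b


A = [[1, 2, 3], [4, 5, 6]]  # 2x3
B = [[1], [0], [2]]         # 3x1

print(product_shape(A, B))  # (2, 1)
print(product_shape(B, A))  # None: B has 1 column but A has 2 rows
```

Here AB exists while BA does not, so for non-square matrices swapping the order can make the product undefined rather than merely different.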

Why programming makes this more confusing

If you’re coming from a programming background, matrix multiplication order feels especially backwards. Most code executes top to bottom, left to right.

When you write result = transform1(transform2(data)), you read it left to right but it executes inside out. That’s already a mental adjustment.

But then you see code like result = A @ B @ x in Python or result = A * B * x in MATLAB, and your brain wants to process A first.

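The code syntax doesn’t distinguish the order in which the transformations act from the order in which the products are evaluated. Python evaluates A @ B @ x as (A @ B) @ x, so the interpreter really does multiply A and B first. Associativity guarantees the same result as A @ (B @ x), where B acts on x first. You have to hold both ideas in your head simultaneously.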

Some libraries use a different convention. Graphics APIs treat vectors either as columns, where matrices multiply from the left (ABx, with B acting first), or as rows, where matrices multiply from the right (xAB, with A acting first). In the row-vector convention the chain does read left to right.

This inconsistency across tools makes the underlying mathematical convention even more important to understand. The math is consistent. The implementations vary.
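The two conventions describe the same computation once the matrices are transposed. The following Python sketch illustrates this with plain lists; the helpers matvec, vecmat, and transpose are assumptions for the example and do not represent any particular graphics API.

```python
def matvec(A, x):
    """Column-vector convention: compute A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def vecmat(x, A):
    """Row-vector convention: compute x A."""
    return [sum(xi * A[i][j] for i, xi in enumerate(x))
            for j in range(len(A[0]))]


def transpose(A):
    """Swap rows and columns."""
    return [list(col) for col in zip(*A)]


A = [[2, 0], [0, 1]]   # stretch x by 2
B = [[0, -1], [1, 0]]  # rotate 90 degrees counterclockwise
x = [1, 2]

# Column vectors: B sits next to x on the right and acts first.
column_result = matvec(A, matvec(B, x))

# Row vectors: the transposed B sits next to x on the left and still acts first.
row_result = vecmat(vecmat(x, transpose(B)), transpose(A))

print(column_result)  # [-4, 1]
print(row_result)     # [-4, 1]
```

In both conventions the matrix adjacent to the vector acts first. Only the side of the page it sits on changes.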

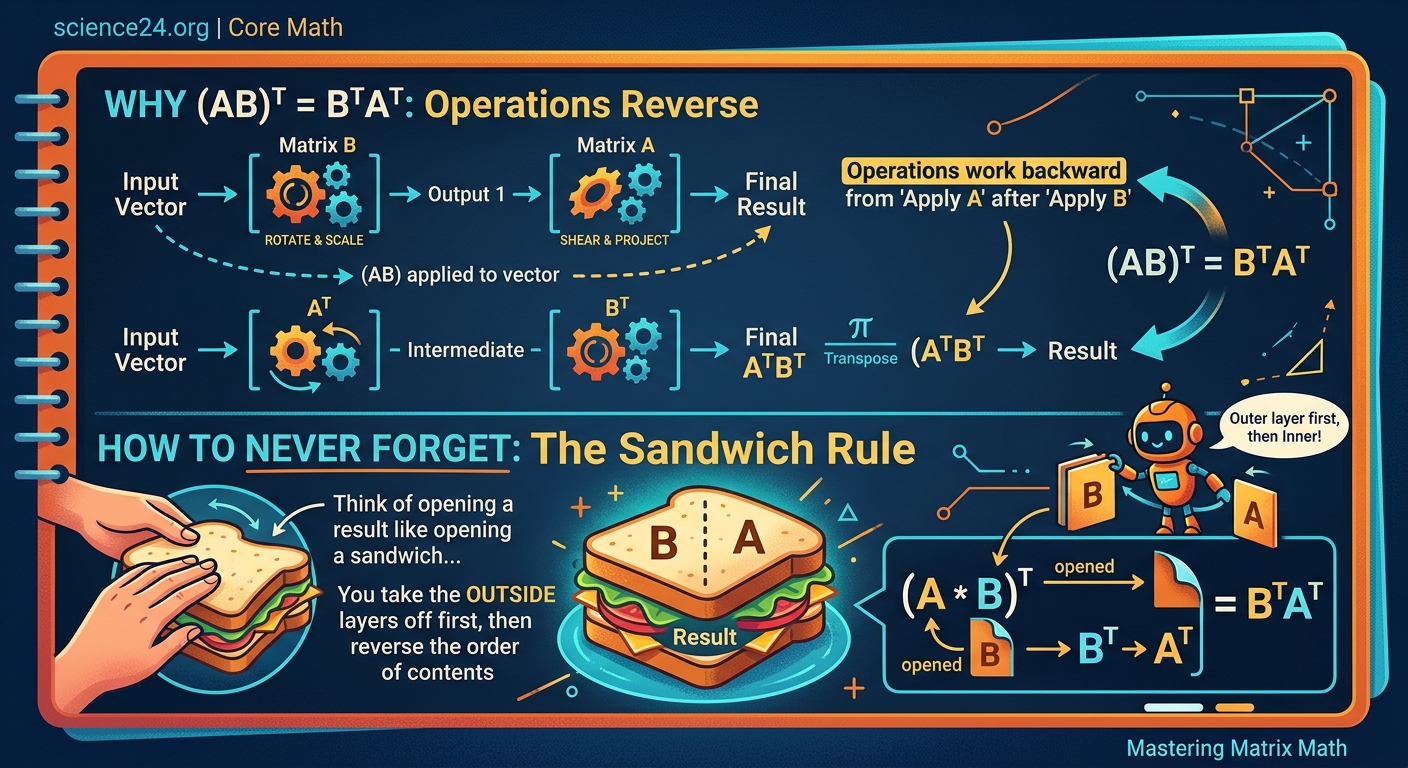

The deeper reason: composition preserves structure

Matrix multiplication order isn’t an isolated convention. It follows from how we write functions, with the operator to the left of its input.

The product AB is defined so that (AB)x = A(Bx) for every x, which makes AB the matrix of the composition “A after B.” If matrix products ran left to right while functions were still written f(g(x)), the two notations would disagree wherever they meet, from operator theory to group theory.

Changing the order would require rewriting centuries of mathematical frameworks. The “backwards” notation is actually the forward-compatible choice.

This is similar to working with imaginary numbers, where progress comes from accepting that some mathematical structures extend our intuition rather than contradict it.

The right-to-left order also makes certain proofs and theorems cleaner. Associativity, the chain rule in calculus, and coordinate transformations all work more elegantly with this convention.
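The chain rule makes a good illustration. The block below is a sketch in LaTeX, assuming differentiable maps g from R^n to R^m and f from R^m to R^k, with D denoting the Jacobian matrix (a notation introduced here, not used elsewhere in this article).

```latex
% Chain rule: the Jacobian of a composition is the product of the Jacobians,
% written in the same order as the composition itself.
D(f \circ g)(x) = Df\bigl(g(x)\bigr)\, Dg(x)

% Special case of linear maps f(y) = Ay and g(x) = Bx:
% Df = A and Dg = B at every point, so
D(f \circ g)(x) = AB
```

The inner map g is applied first and its Jacobian appears on the right, matching the order in the product AB.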

Practical steps to internalize the order

Here’s a systematic approach to make the right-to-left order second nature:

- Every time you see a matrix product, identify the rightmost element first. Circle it if you’re working on paper.

- Trace the transformation chain outward from that element, labeling each step: “first this, then this, then this.”

- Before computing, write out the transformation sequence in words. For (ABC)x, write “C transforms x, B transforms Cx, A transforms BCx.”

- Practice with geometric transformations where you can visualize the result. Rotate, scale, and translate simple shapes to see the order in action.

- Work through examples where order matters. Compute both AB and BA to see that they give different results.

The goal is to build muscle memory. Your hand should automatically move to the rightmost matrix before you consciously think about it.

Repetition matters more than understanding at this stage. Do enough problems and the order becomes reflexive.
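For the last exercise in the list, here is one pair of matrices where the order visibly matters. It is a minimal Python sketch with hypothetical helper names; the rotation and stretch are just convenient examples.

```python
def matvec(A, x):
    """Multiply matrix A (a list of rows) by column vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]


A = [[0, -1], [1, 0]]  # rotate 90 degrees counterclockwise
B = [[2, 0], [0, 1]]   # stretch x by 2

AB = matmul(A, B)  # stretch first, then rotate
BA = matmul(B, A)  # rotate first, then stretch

print(AB)  # [[0, -1], [2, 0]]
print(BA)  # [[0, -2], [1, 0]]

x = [1, 0]
print(matvec(AB, x))  # [0, 2]: stretched to (2, 0), then rotated
print(matvec(BA, x))  # [0, 1]: rotated to (0, 1), which the stretch leaves alone
```

A non-uniform stretch is used on purpose: a uniform scaling such as 2I commutes with every matrix, so it would hide the effect.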

Connecting to other mathematical patterns

The right-to-left pattern appears throughout mathematics, not just in matrix multiplication. Recognizing these connections reinforces your intuition.

- Function composition: f(g(x)) applies g first.

- Derivative chain rule: (f ∘ g)′(x) = f′(g(x)) · g′(x) evaluates the inner function first.

- Permutation multiplication: in the most common convention, applying permutation σ and then τ is written τσ (some textbooks compose left to right instead).

All these conventions share the same logic. The operation closest to the input happens first. The operation farthest from the input happens last.
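Permutations make the pattern easy to test by hand. The sketch below assumes the right-to-left convention and stores a permutation as a tuple p where p[i] is the image of i; compose is a hypothetical helper.

```python
def compose(tau, sigma):
    """Return the permutation tau∘sigma: apply sigma first, then tau."""
    return tuple(tau[sigma[i]] for i in range(len(sigma)))


sigma = (1, 0, 2)  # swap positions 0 and 1
tau = (0, 2, 1)    # swap positions 1 and 2

print(compose(tau, sigma))  # (2, 0, 1): sigma first, then tau
print(compose(sigma, tau))  # (1, 2, 0): tau first, then sigma
```

As with matrices, the permutation written closest to the input acts first, and swapping the order gives a different result.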

This pattern extends to abstract algebra. Whenever group elements act as transformations, and whenever morphisms are composed in category theory, the standard notation g ∘ f means “f first, then g.”

Once you see the pattern across multiple domains, matrix multiplication stops feeling like a special case. It’s part of a broader mathematical language.

When order doesn’t matter

Not all matrix products are order-dependent. Some special cases commute, meaning AB = BA.

Diagonal matrices commute with each other. A scalar multiple of the identity matrix commutes with any matrix. Rotation matrices around the same axis commute.

Recognizing these cases helps you spot shortcuts. If you know two matrices commute, you can rearrange them for computational convenience without changing the result.
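Each of these commuting cases can be verified numerically. The following is a minimal Python sketch; the specific matrices and angles are arbitrary choices, and the rotations are compared with a small tolerance because they involve floating-point values.

```python
import math


def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]


def rotation(theta):
    """2D rotation about the origin by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def close(A, B, tol=1e-12):
    """Compare two matrices entry by entry within an absolute tolerance."""
    return all(math.isclose(a, b, abs_tol=tol)
               for row_a, row_b in zip(A, B) for a, b in zip(row_a, row_b))


D1, D2 = [[2, 0], [0, 3]], [[5, 0], [0, 7]]  # two diagonal matrices
cI = [[4, 0], [0, 4]]                        # scalar multiple of the identity
M = [[1, 2], [3, 4]]                         # an arbitrary matrix
R1, R2 = rotation(0.3), rotation(1.1)        # rotations about the same axis

print(matmul(D1, D2) == matmul(D2, D1))         # True
print(matmul(cI, M) == matmul(M, cI))           # True
print(close(matmul(R1, R2), matmul(R2, R1)))    # True
```

Rotations about the same axis commute because either order produces a single rotation by the sum of the angles.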

But these are exceptions. The general rule is that matrix multiplication is non-commutative. Always assume AB ≠ BA unless you can prove otherwise.

This is another way matrix multiplication differs from regular number multiplication. With numbers, 3 × 5 = 5 × 3 always. With matrices, order almost always matters.

Building confidence through practice problems

Theory only takes you so far. You need to work through examples until the order becomes automatic.

Here are problem types that build intuition:

- Compute (AB)x and A(Bx) separately to verify they give the same result

- Calculate AB and BA to see they produce different matrices

- Apply three transformations in sequence and verify (ABC)x step by step

- Work backwards from a final result to determine what transformation sequence produced it

- Translate word problems into matrix expressions, focusing on operation order

Start with 2×2 matrices and simple vectors. The small size lets you focus on order without getting lost in arithmetic.

As you gain confidence, move to 3×3 matrices and more complex transformations. The principles stay the same regardless of size.

If you’re working through problem sets and making mistakes, that’s progress. Each error teaches your brain to catch the pattern. Just like common algebra mistakes become less frequent with practice, matrix order errors fade as you build experience.

Why this matters beyond linear algebra

Understanding matrix multiplication order isn’t just about passing a linear algebra exam. This concept appears throughout applied mathematics, computer science, and engineering.

Computer graphics use transformation matrices to position and animate objects. The order determines whether you rotate-then-translate or translate-then-rotate, which produces completely different results.
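Here is a small Python sketch of that difference. It assumes homogeneous coordinates, a standard graphics technique in which a 2D point (x, y) is stored as [x, y, 1] so that a translation can be written as a 3×3 matrix; the helper name and the specific matrices are illustrative choices.

```python
def matvec(A, x):
    """Multiply matrix A (a list of rows) by column vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


# Rotate 90 degrees counterclockwise about the origin.
R = [[0, -1, 0],
     [1, 0, 0],
     [0, 0, 1]]

# Translate by (3, 0).
T = [[1, 0, 3],
     [0, 1, 0],
     [0, 0, 1]]

p = [1, 0, 1]  # the point (1, 0) in homogeneous coordinates

rotate_then_translate = matvec(T, matvec(R, p))  # (TR)p: R acts first
translate_then_rotate = matvec(R, matvec(T, p))  # (RT)p: T acts first

print(rotate_then_translate)  # [3, 1, 1], the point (3, 1)
print(translate_then_rotate)  # [0, 4, 1], the point (0, 4)
```

The same two operations send (1, 0) to (3, 1) in one order and to (0, 4) in the other.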

Machine learning uses matrix multiplication to propagate data through neural networks. The order of the weight matrices is the order of the layers: in an expression like W₂(W₁x), the layer with weights W₁ processes the input first.

Physics uses matrices to represent quantum states and operators. The non-commutative nature of matrix multiplication reflects fundamental properties of quantum mechanics.

Control systems, robotics, signal processing, and data science all rely on matrix operations. Getting the order right isn’t pedantic. It’s the difference between a system that works and one that fails.

Making peace with mathematical notation

The “backwards” feeling eventually disappears. After enough practice, right-to-left order becomes as natural as reading function notation inside-out.

Your brain is adapting to a new pattern. That adaptation takes time and repetition. There’s no shortcut.

But here’s the good news: once you internalize this pattern, you’ll spot it everywhere. You’ll see how mathematical notation is actually remarkably consistent across different topics.

The notation isn’t backwards. Your initial intuition was based on reading text, not composing operations. You’re not learning an exception. You’re learning the rule.

Transforming confusion into clarity

Matrix multiplication runs right to left because matrices represent transformations, and transformations compose like functions. The matrix closest to the vector acts first. Each matrix to the left adds another layer.

This order isn’t arbitrary. It preserves mathematical structure across algebra, calculus, and beyond. Fighting the convention means fighting centuries of consistent notation.

Stop memorizing. Start visualizing. See each matrix as an action. Read the chain from the data outward. Practice until your hand moves to the rightmost matrix automatically.

The confusion you feel right now is temporary. Thousands of students have felt the same way and moved past it. You will too. Work through examples. Verbalize the order. Trust that repetition builds intuition.

The moment you stop thinking about which direction matrix multiplication goes is the moment you’ve truly learned it. That moment comes faster than you think.